Attention

LSTM

Before introducing attention, and to make its mechanism easier to follow, we first briefly introduce the RNN and its variant, the LSTM.

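The figure above shows a standard RNN. $x_0$ to $x_t$ are the inputs from the initial time step up to the final time step $t$; in machine translation they correspond to the words of the source sentence. To mimic the way people consult the surrounding context when translating, the network combines the hidden state from the previous time step with the current input and uses the result to compute the hidden state at the current time step. In principle, the output at any time step therefore depends on the current input and on all earlier inputs. In practice, however, this structure retains information from earlier steps poorly: once the relevant input lies more than a few steps back, it tends to be forgotten, and the correct translation cannot be produced. To address this, the RNN was modified into a special recurrent structure, the LSTM.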
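The recurrence described above can be sketched in a few lines of NumPy. This is a minimal illustration assuming the common formulation $h_t = \tanh(W \cdot [h_{t-1}, x_t] + b)$; the names `rnn_step`, `rnn_forward`, `W`, and `b` are hypothetical, and random weights stand in for trained ones.

```python
import numpy as np

def rnn_step(h_prev, x_t, W, b):
    """One vanilla RNN step: concatenate the previous hidden state with the
    current input, apply an affine map, then squash with tanh."""
    concat = np.concatenate([h_prev, x_t])  # [h_{t-1}, x_t]
    return np.tanh(W @ concat + b)

def rnn_forward(xs, hidden_size, W, b):
    """Run the RNN over the sequence xs and return every hidden state."""
    h = np.zeros(hidden_size)  # initial hidden state
    states = []
    for x_t in xs:
        # h_t depends on x_t directly and, through h_{t-1}, on all earlier inputs.
        h = rnn_step(h, x_t, W, b)
        states.append(h)
    return states

# Usage: 3 time steps, input size 4, hidden size 5.
rng = np.random.default_rng(0)
input_size, hidden_size = 4, 5
W = rng.normal(scale=0.5, size=(hidden_size, hidden_size + input_size))
b = np.zeros(hidden_size)
xs = [rng.normal(size=input_size) for _ in range(3)]
states = rnn_forward(xs, hidden_size, W, b)
print(len(states), states[-1].shape)  # 3 (5,)
```

The only path from an early input to a late hidden state runs through repeated matrix multiplications and tanh squashing, which is one intuition for why distant information tends to fade in this architecture.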

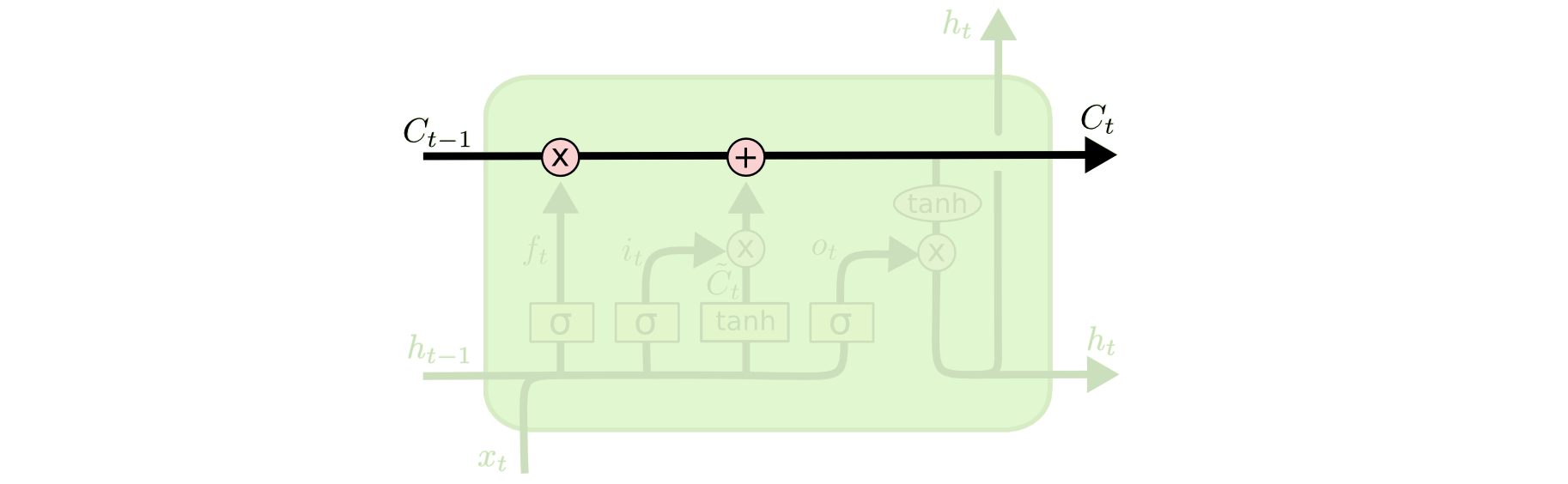

The key to LSTMs is the cell state, the horizontal line running through the top of the diagram.

The cell state is kind of like a conveyor belt. It runs straight down the entire chain, with only some minor linear interactions. It’s very easy for information to just flow along it unchanged.

The LSTM does have the ability to remove or add information to the cell state, carefully regulated by structures called gates.

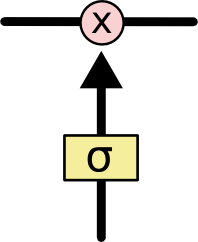

Gates are a way to optionally let information through. They are composed of a sigmoid neural net layer and a pointwise multiplication operation.

The sigmoid layer outputs numbers between zero and one, describing how much of each component should be let through. A value of zero means “let nothing through,” while a value of one means “let everything through!”
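A gate can be sketched as a sigmoid whose output is multiplied pointwise with the vector being gated. The function name `gate` and the example values are hypothetical; in a real LSTM the pre-activation would come from a learned layer.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gate(values, pre_activation):
    """Let each component of `values` through in proportion to
    sigmoid(pre_activation), which lies strictly between 0 and 1."""
    return sigmoid(pre_activation) * values  # pointwise multiplication

values = np.array([2.0, 2.0, 2.0])
# Very negative pre-activation ~ "let nothing through",
# zero ~ "let half through", very positive ~ "let everything through".
out = gate(values, np.array([-20.0, 0.0, 20.0]))
print(np.round(out, 3))  # [0. 1. 2.]
```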

An LSTM has three of these gates, to protect and control the cell state.

Step-by-Step LSTM Walk Through

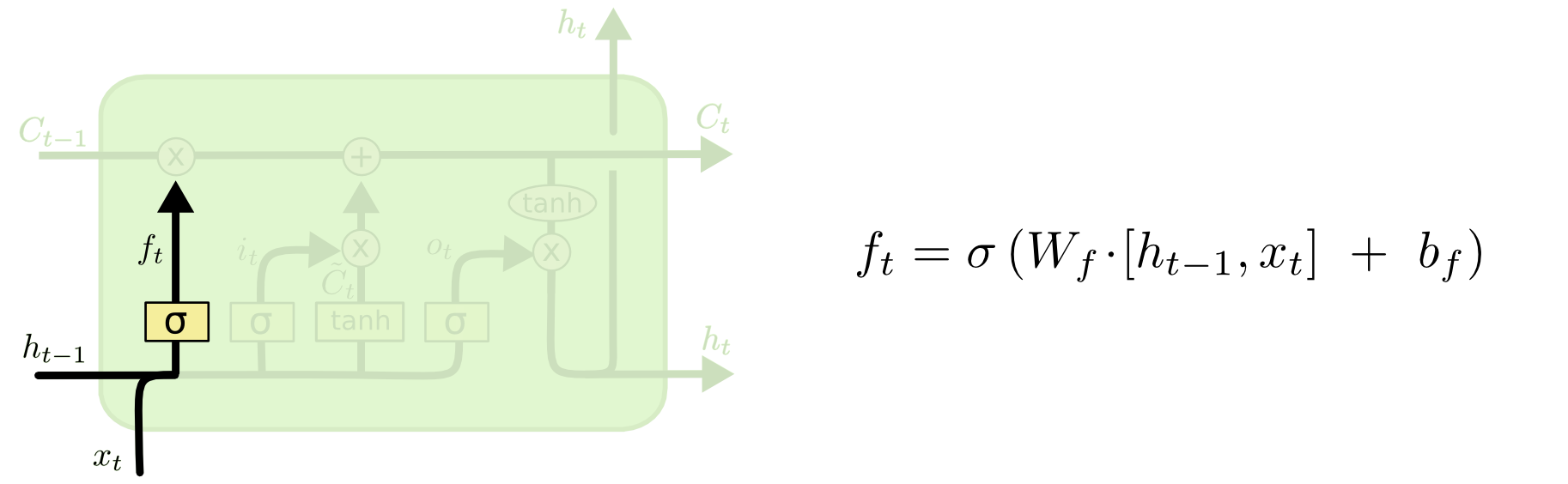

The first step in our LSTM is to decide what information we’re going to throw away from the cell state. This decision is made by a sigmoid layer called the “forget gate layer.” It looks at $h_{t-1}$ and $x_t$, and outputs a number between $0$ and $1$ for each number in the cell state $C_{t-1}$. A $1$ represents “completely keep this” while a $0$ represents “completely get rid of this.”
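Written out, assuming the standard formulation these diagrams depict (with $\sigma$ the logistic sigmoid and $[h_{t-1}, x_t]$ the concatenation of the previous hidden state and the current input), the forget gate is:

$$f_t = \sigma\left(W_f \cdot [h_{t-1}, x_t] + b_f\right)$$

Here $W_f$ and $b_f$ are the gate’s learned weight matrix and bias. Since $\sigma$ maps every entry into $(0, 1)$, each component of $f_t$ says how much of the corresponding component of $C_{t-1}$ to keep.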

Let’s go back to our example of a language model trying to predict the next word based on all the previous ones. In such a problem, the cell state might include the gender of the present subject, so that the correct pronouns can be used. When we see a new subject, we want to forget the gender of the old subject.

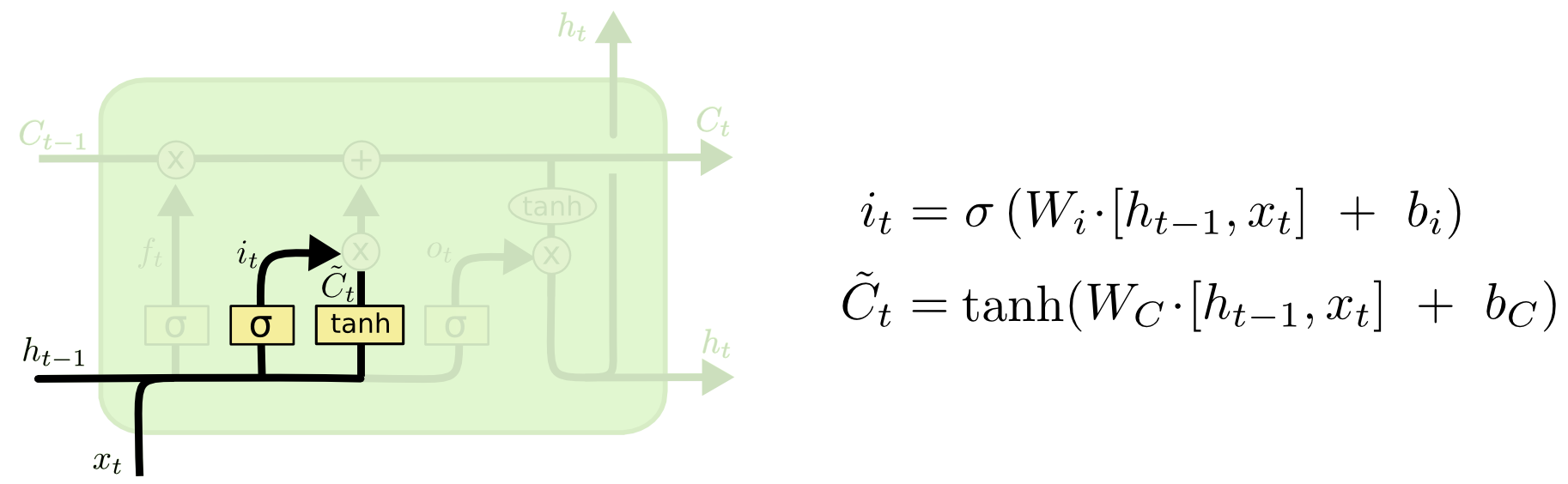

The next step is to decide what new information we’re going to store in the cell state. This has two parts. First, a sigmoid layer called the “input gate layer” decides which values we’ll update. Next, a tanh layer creates a vector of new candidate values, $\tilde{C}_t$, that could be added to the state. In the next step, we’ll combine these two to create an update to the state.
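In the same notation, the input gate and the candidate values are:

$$i_t = \sigma\left(W_i \cdot [h_{t-1}, x_t] + b_i\right)$$

$$\tilde{C}_t = \tanh\left(W_C \cdot [h_{t-1}, x_t] + b_C\right)$$

$W_i, b_i$ and $W_C, b_C$ are separate learned parameters; the $\tanh$ keeps each candidate value in $(-1, 1)$.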

In the example of our language model, we’d want to add the gender of the new subject to the cell state, to replace the old one we’re forgetting.

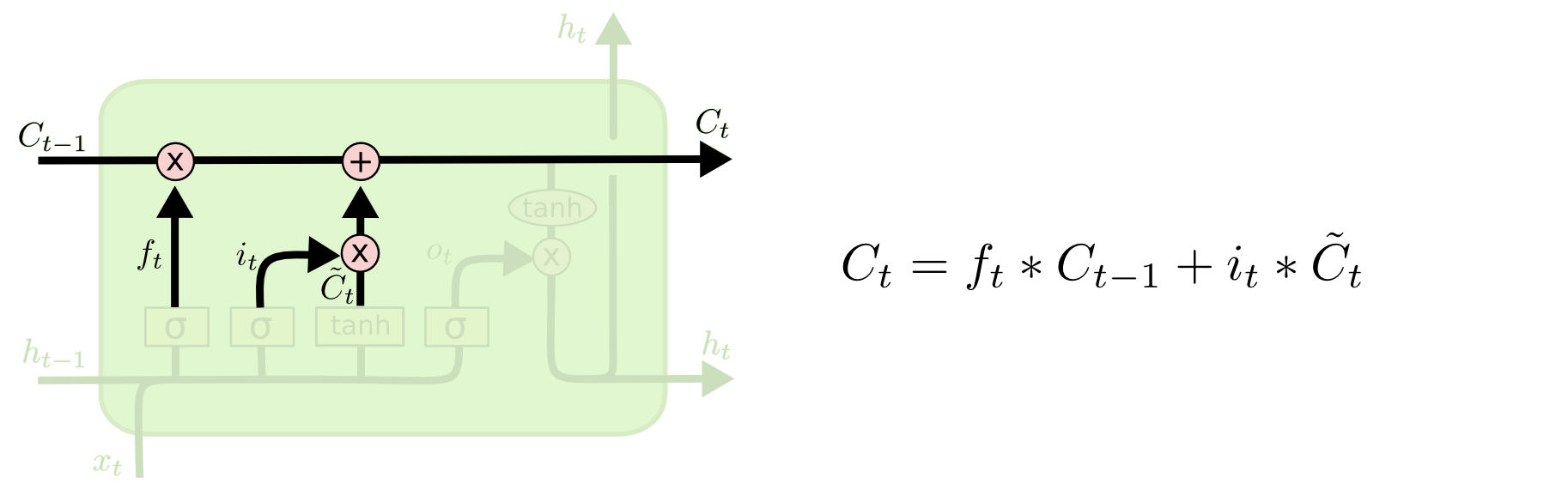

It’s now time to update the old cell state, $C_{t-1}$, into the new cell state $C_t$. The previous steps already decided what to do; we just need to actually do it.

We multiply the old state by $f_t$, forgetting the things we decided to forget earlier. Then we add $i_t * \tilde{C}_t$. These are the new candidate values, scaled by how much we decided to update each state value.
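As an equation, with $*$ denoting elementwise multiplication:

$$C_t = f_t * C_{t-1} + i_t * \tilde{C}_t$$

Two limiting cases illustrate it: where $f_t = 1$ and $i_t = 0$, that component of the cell state is copied through unchanged; where $f_t = 0$ and $i_t = 1$, the old value is discarded and replaced by the candidate $\tilde{C}_t$.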

In the case of the language model, this is where we’d actually drop the information about the old subject’s gender and add the new information, as we decided in the previous steps.

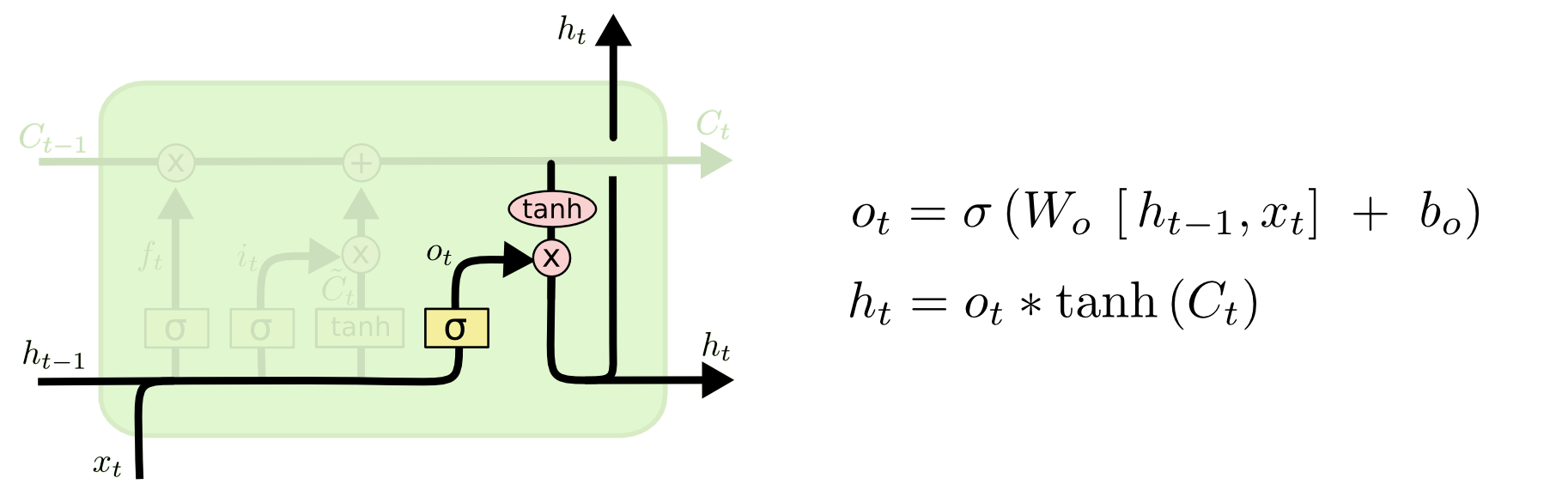

Finally, we need to decide what we’re going to output. This output will be based on our cell state, but will be a filtered version. First, we run a sigmoid layer which decides what parts of the cell state we’re going to output. Then, we put the cell state through $\tanh$ (to push the values to be between $-1$ and $1$) and multiply it by the output of the sigmoid gate, so that we only output the parts we decided to.
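The output step corresponds to $o_t = \sigma(W_o \cdot [h_{t-1}, x_t] + b_o)$ and $h_t = o_t * \tanh(C_t)$. Putting all four steps together, one LSTM time step can be sketched in NumPy as below. This is a minimal illustration of the standard formulation walked through above, not a production implementation; `lstm_step`, `init_params`, and the parameter keys (`W_f`, `b_f`, and so on) are hypothetical names, and small random weights stand in for trained ones.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(h_prev, c_prev, x_t, p):
    """One standard LSTM step. p holds weight matrices W_f, W_i, W_c, W_o of
    shape (hidden, hidden + input) and bias vectors b_f, b_i, b_c, b_o."""
    z = np.concatenate([h_prev, x_t])            # [h_{t-1}, x_t]
    f_t = sigmoid(p["W_f"] @ z + p["b_f"])       # forget gate
    i_t = sigmoid(p["W_i"] @ z + p["b_i"])       # input gate
    c_tilde = np.tanh(p["W_c"] @ z + p["b_c"])   # candidate values
    c_t = f_t * c_prev + i_t * c_tilde           # new cell state
    o_t = sigmoid(p["W_o"] @ z + p["b_o"])       # output gate
    h_t = o_t * np.tanh(c_t)                     # filtered output
    return h_t, c_t

def init_params(input_size, hidden_size, rng):
    """Small random weights and zero biases for the four layers."""
    p = {}
    for g in ("f", "i", "c", "o"):
        p["W_" + g] = rng.normal(scale=0.1, size=(hidden_size, hidden_size + input_size))
        p["b_" + g] = np.zeros(hidden_size)
    return p

# Usage: 4 time steps, input size 3, hidden size 2.
rng = np.random.default_rng(1)
p = init_params(3, 2, rng)
h, c = np.zeros(2), np.zeros(2)
for _ in range(4):
    h, c = lstm_step(h, c, rng.normal(size=3), p)
print(h.shape, c.shape)  # (2,) (2,)
```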

For the language model example, since it just saw a subject, it might want to output information relevant to a verb, in case that’s what is coming next. For example, it might output whether the subject is singular or plural, so that we know what form a verb should be conjugated into if that’s what follows next.

Variants on Long Short Term Memory

What I’ve described so far is a pretty normal LSTM. But not all LSTMs are the same as the above. In fact, it seems like almost every paper involving LSTMs uses a slightly different version. The differences are minor, but it’s worth mentioning some of them.

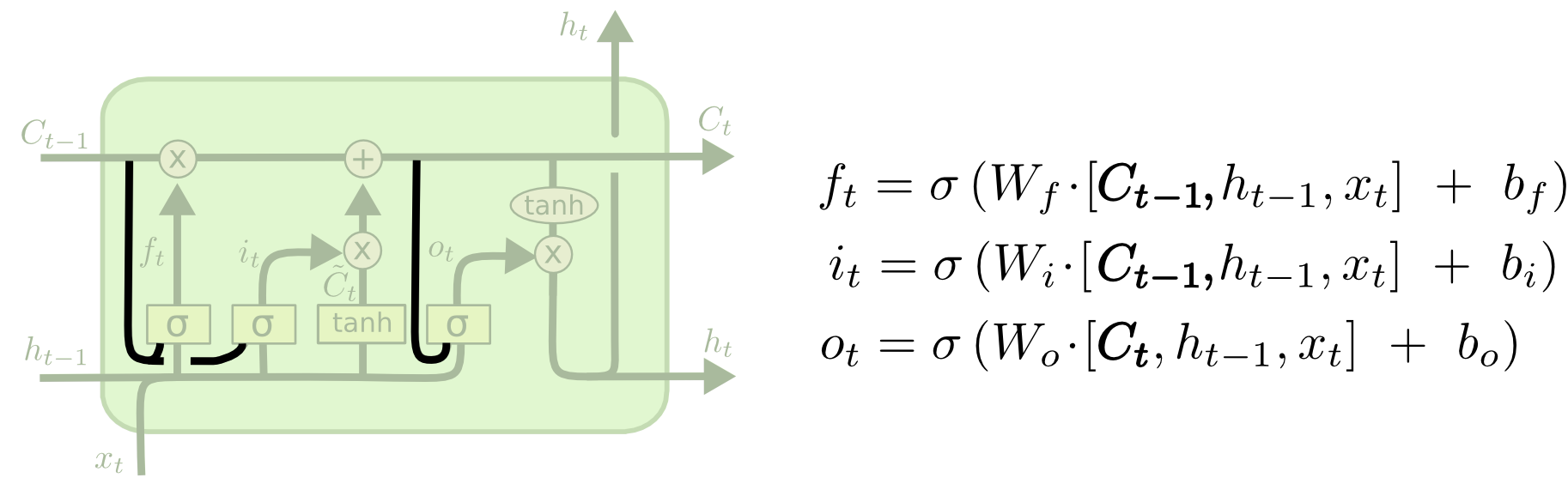

One popular LSTM variant, introduced by Gers & Schmidhuber (2000), is adding “peephole connections.” This means that we let the gate layers look at the cell state.

The above diagram adds peepholes to all the gates, but many papers will give some peepholes and not others.
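With peepholes on all three gates, as in the diagram above, the gate equations can be written in the same concatenation notation; note that the output gate looks at the new cell state $C_t$:

$$f_t = \sigma\left(W_f \cdot [C_{t-1}, h_{t-1}, x_t] + b_f\right)$$

$$i_t = \sigma\left(W_i \cdot [C_{t-1}, h_{t-1}, x_t] + b_i\right)$$

$$o_t = \sigma\left(W_o \cdot [C_t, h_{t-1}, x_t] + b_o\right)$$

Many implementations restrict the peephole weights to a diagonal, i.e. an elementwise product with the cell state; the concatenated form above is a notational simplification.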

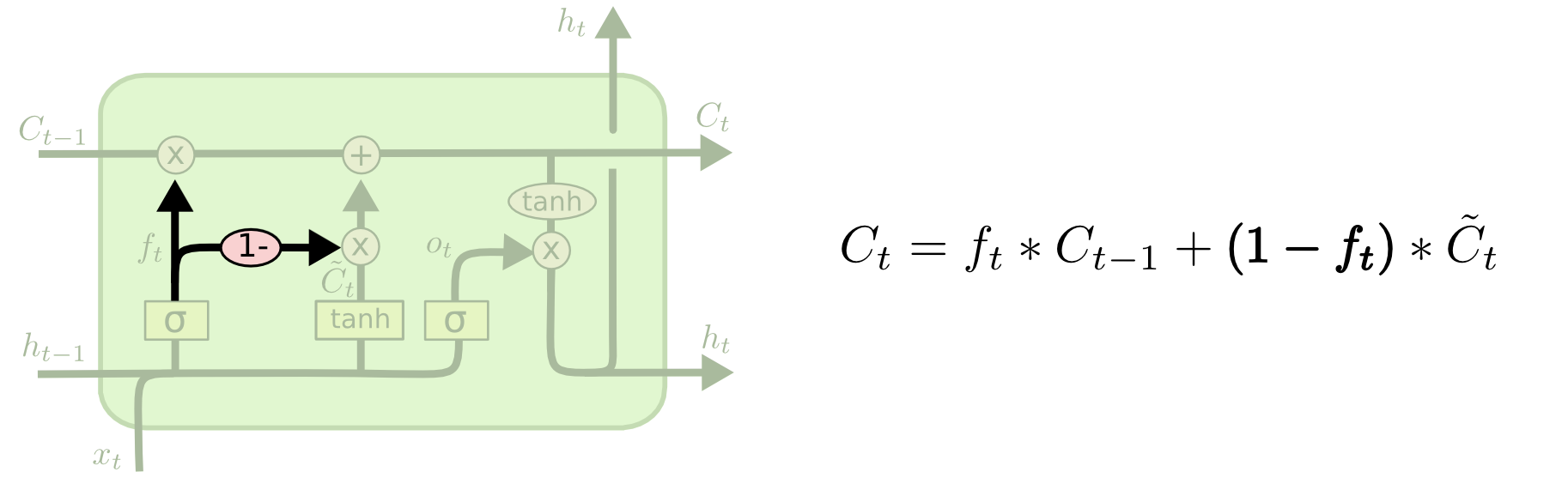

Another variation is to use coupled forget and input gates. Instead of separately deciding what to forget and what we should add new information to, we make those decisions together. We only forget when we’re going to input something in its place. We only input new values to the state when we forget something older.
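With coupled gates, the input gate is replaced by $1 - f_t$, so the cell update becomes:

$$C_t = f_t * C_{t-1} + (1 - f_t) * \tilde{C}_t$$

Each component of $C_t$ is then a weighted average of its old value and the candidate: the fraction that is forgotten is exactly the fraction filled with new content.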

A slightly more dramatic variation on the LSTM is the Gated Recurrent Unit, or GRU, introduced by Cho, et al. (2014). It combines the forget and input gates into a single “update gate.” It also merges the cell state and hidden state, and makes some other changes. The resulting model is simpler than standard LSTM models, and has been growing increasingly popular.
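A GRU step can be sketched as follows, assuming the common formulation $z_t = \sigma(W_z \cdot [h_{t-1}, x_t])$, $r_t = \sigma(W_r \cdot [h_{t-1}, x_t])$, $\tilde{h}_t = \tanh(W \cdot [r_t * h_{t-1}, x_t])$, and $h_t = (1 - z_t) * h_{t-1} + z_t * \tilde{h}_t$. Biases are omitted for brevity, the names `gru_step`, `W_z`, `W_r`, and `W_h` are hypothetical, and conventions differ on whether $z_t$ or $1 - z_t$ multiplies $h_{t-1}$.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gru_step(h_prev, x_t, W_z, W_r, W_h):
    """One GRU step (biases omitted). Each weight matrix has shape
    (hidden_size, hidden_size + input_size)."""
    hx = np.concatenate([h_prev, x_t])           # [h_{t-1}, x_t]
    z_t = sigmoid(W_z @ hx)                      # update gate
    r_t = sigmoid(W_r @ hx)                      # reset gate
    # Candidate state: the reset gate scales how much of h_{t-1} is used.
    h_tilde = np.tanh(W_h @ np.concatenate([r_t * h_prev, x_t]))
    # 1 - z_t acts as the forget gate and z_t as the input gate;
    # there is no separate cell state.
    return (1.0 - z_t) * h_prev + z_t * h_tilde

# Usage: 5 time steps, input size 3, hidden size 4.
rng = np.random.default_rng(2)
hidden_size, input_size = 4, 3
W_z, W_r, W_h = (rng.normal(scale=0.1, size=(hidden_size, hidden_size + input_size))
                 for _ in range(3))
h = np.zeros(hidden_size)
for _ in range(5):
    h = gru_step(h, rng.normal(size=input_size), W_z, W_r, W_h)
print(h.shape)  # (4,)
```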

These are only a few of the most notable LSTM variants. There are lots of others, like Depth Gated RNNs by Yao, et al. (2015). There are also some completely different approaches to tackling long-term dependencies, like Clockwork RNNs by Koutnik, et al. (2014).

Which of these variants is best? Do the differences matter? Greff, et al. (2015) do a nice comparison of popular variants, finding that they’re all about the same. Jozefowicz, et al. (2015) tested more than ten thousand RNN architectures, finding some that worked better than LSTMs on certain tasks.

Global attention

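In machine translation, we assume that the output at every position of the translation is related, to a greater or lesser degree, to the context of the source sentence. When producing each target word, global attention takes all of the source information into account, each part multiplied by a weight. “Attention” here means learning a different weight vector for the output at each position.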

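Global attention first takes as input the hidden state $h_t$ at the top layer of the stacked LSTM. The goal is then to derive a context vector $c_t$ that captures the relevant source-side information to help predict the current target word $y_t$. The vector $a_t$ in the figure above is the core of attention: it holds the weights relating the output at time $t$ to each word of the entire input sentence.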
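The weighting described above can be sketched with NumPy, assuming the simplest score function, a dot product between the decoder state $h_t$ and each encoder state $\bar{h}_s$ (other score functions, such as a learned bilinear form, are also used). The alignment vector $a_t$ is a softmax over all source positions, and the context vector $c_t$ is the $a_t$-weighted average of the source states. The function names and toy vectors are hypothetical.

```python
import numpy as np

def softmax(v):
    e = np.exp(v - np.max(v))  # subtract the max for numerical stability
    return e / e.sum()

def global_attention(h_t, source_states):
    """h_t: top-layer decoder hidden state, shape (d,).
    source_states: encoder hidden states for all S source words, shape (S, d).
    Returns the alignment weights a_t, shape (S,), and the context vector c_t, shape (d,)."""
    scores = source_states @ h_t   # dot-product score for every source position
    a_t = softmax(scores)          # non-negative weights over ALL positions, summing to 1
    c_t = a_t @ source_states      # weighted average of the source states
    return a_t, c_t

# Usage: a 3-word source sentence with 2-dimensional hidden states.
source_states = np.array([[1.0, 0.0],
                          [0.0, 1.0],
                          [1.0, 1.0]])
h_t = np.array([2.0, 0.0])
a_t, c_t = global_attention(h_t, source_states)
print(np.round(a_t, 3), np.round(c_t, 3))
```

Here the first and third source states score equally against $h_t$, so they receive equal weight and the second receives less. In a full model, $c_t$ would then be combined with $h_t$ to predict $y_t$.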

Local attention
